Are You Your Brain?

A Reason to Question the Brain-Identity View

Many people assume that they are their brains, or perhaps some part (or state) of a brain. The thought is understandable. Brain activity correlates tightly with conscious experience, after all. Damage the brain, and consciousness changes. Stimulate the brain, and experience changes again. One natural way to explain these correlations is to say that you are identical to your brain.

However, there is a reason to be skeptical of the inference here. I’ll explain why in three brief steps.

Step 1: Separate Causes from their Effects

First, start with a simple distinction. A cause of something does not automatically have the properties of its effects.

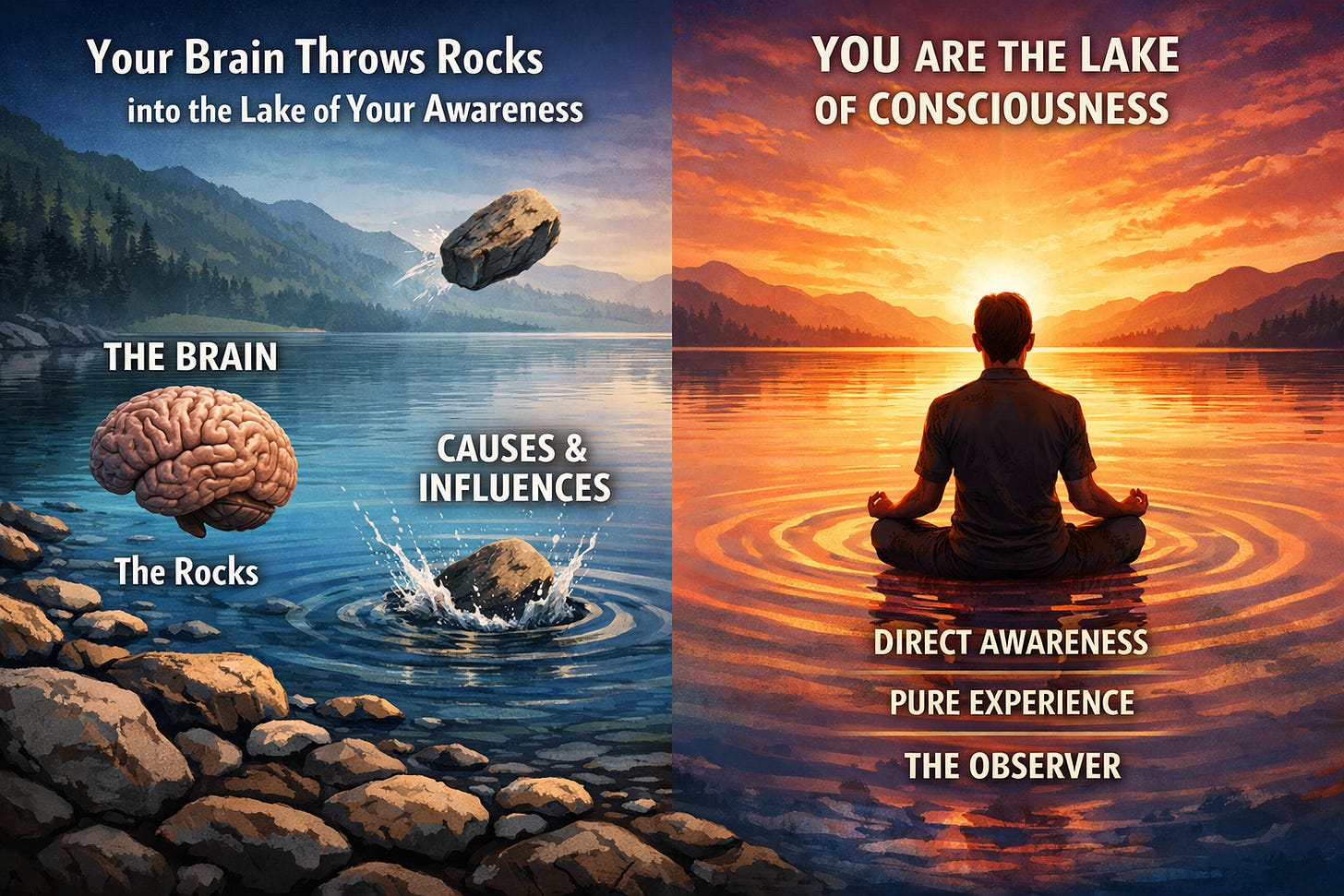

To illustrate, suppose you toss rocks into a lake. Ripples form on the lake. But the rocks do not themselves ripple. The lake ripples. The rocks play a causal role, but they are not the thing that undergoes the change. It would be a mistake to assume that, because the rock causes ripples, the rock itself ripples.

In the same way, just because brain states may cause changes in states of consciousness, it does not follow that the brain states themselves are states of consciousness.

Is the bearer of consciousness the same as the things that cause or affect consciousness? That question is not answered merely by identifying brain states as causes of states of consciousness.

Step 2: Notice Your Own Consciousness

Second, you are already able to recognize something that is conscious: yourself. You do not need to inspect your brain, or posit some bearer of consciousness “out there,” to know that you are conscious. Your awareness of your own consciousness is immediate.

Consciousness, in your own case, is not a theoretical posit and not the result of inference. It is given directly. Just as you do not need to look at the rocks to see the lake ripple, you do not need to look at brains to know that you are conscious. You know this by direct awareness of yourself as conscious.

Step 3: Razor Off Unnecessary Complexity

From here, we could still posit an identity between you and your brain. But I think this posit has two problems.

First, I think brain-identity mismatches categories. Brains are extended objects in space, which can occur as contents of consciousness. But the consciousness itself transcends the contents that occur within it, whether they are spatial contents in visual imagery, thoughts, or feelings. On my view, treating brains as conscious is like treating rocks as rippling. It’s a category mistake.

Second, even if the categories could be mixed somehow, positing identity multiplies complexity beyond necessity. We already recognize something that is conscious: ourselves. We could posit an identity relation between you and your brain (or parts or states of your brain). But this would be like positing, in addition to the lake and the rock, an identity relation between them. The posit adds unnecessary complexity to our total theory of things.

To be clear, reducing reality to fewer categories is not an advantage if (i) we have independent awareness of the respective categories (rocks, lakes, brains, and conscious beings), and (ii) the reduction itself requires an extra, unnecessary posit (e.g., an identity statement) in our theory. The simpler theory is more likely, other things being equal.

Addendum: Identity, Parsimony, and Direct Data

I’m adding this section based on comments below.

A helpful follow-up question concerns how identity claims interact with parsimony. The worry is this: if identity statements reduce the number of things in one’s ontology (as with Hesperus = Phosphorus or water = H₂O), then shouldn’t identifying consciousness with the brain make a theory more parsimonious rather than less?

There is an important distinction here.

Parsimony is not fundamentally about counting labels or identity statements. It is about the complexity of a theory relative to its data. A theory becomes less probable when it introduces additional independent posits beyond what is already given. That’s because each posit is another place where the theory can go wrong.
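One way to make this precise, offered only as a rough sketch: let T stand for a theory and A for an additional posit that is not already entailed by T. These symbols are my own illustrative labels, not standard notation for this debate. The conjunction rule of probability then gives:

```latex
P(T \wedge A) \;=\; P(T)\, P(A \mid T) \;\le\; P(T)
```

The inequality is strict whenever P(A | T) < 1, that is, whenever the extra posit could be false even if the rest of the theory is true. This captures only the probabilistic cost of an added commitment. It does not by itself settle which claims count as additional posits, which is the question the rest of this section addresses.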

Opaque contexts matter. In the case of Hesperus and Phosphorus, what is directly given in experience are appearances: a bright object in the evening sky and a bright object in the morning sky. The underlying object (Venus) is not part of the direct data. A theory that posits two unseen things is more complex than one that posits one unseen thing. Here, identity reduces complexity because both relata lie beyond the data.

The situation with consciousness is different. Your conscious experience—and you, as the subject of that experience—are not theoretical posits inferred from appearances. They are part of your direct data. By contrast, brain states are known indirectly, via instruments, testimony, and theory. When one posits (i) a physical entity behind perceptual appearances and then (ii) an identity between that entity and the conscious subject, one has added structure that goes beyond what is directly given.

Even if the identity reduces the number of kinds, it still increases the number of theoretical commitments beyond the data. And it is this added structure (not mere kind-counting) that drives up complexity.

The comparison with water = H₂O helps clarify the point. We can be directly aware of our experience with water without being aware of its molecular structure, and our experience is of course not the same as water itself. Here the referent of “water” (the molecular structure) is not known directly, and so identifying that referent with something we independently know about indirectly simplifies our theory. But unlike H₂O (which we do not know directly), consciousness is something you can know directly.

So the central point remains: identity claims can reduce complexity only when both sides of the identity lie beyond the data.

Conclusion

On this analysis, your brain throws rocks into the lake of your awareness. Neural activity shapes, constrains, and influences experience. But it does not follow that neural activity is what experiences. It remains at least possible that you are the lake, not the rocks.

I don't think that's how identity statements and considerations pertaining to parsimony (or ontologies) work. E.g., after my discovery that Hesperus = Phosphorus (or that energy and mass are equivalent, or two aspects of one stuff, which we might call mass-energy), my ontology becomes more parsimonious than it was before, not less. Likewise, if physical stuff = conscious stuff (or perhaps more properly, if "the physical aspect and the conscious aspect are two aspects of one and the same thing/stuff"), then my ontology becomes more parsimonious. We count things in the ontology, and that's what determines its degree of parsimony. Not floating identity statement thingamajigs.

I would recommend that you look into the theory of the brain as a transmissive filter, from William James or Henri Bergson ... and also, taking advantage of this dialogue, that you study, even if only superficially, some of the empirical data on the soul, such as near-death experiences, memories of apparent past lives, and mediumship.

It is a waste that talented philosophers do not address these topics because they are heterodox or marginalized from the standard scientific picture.